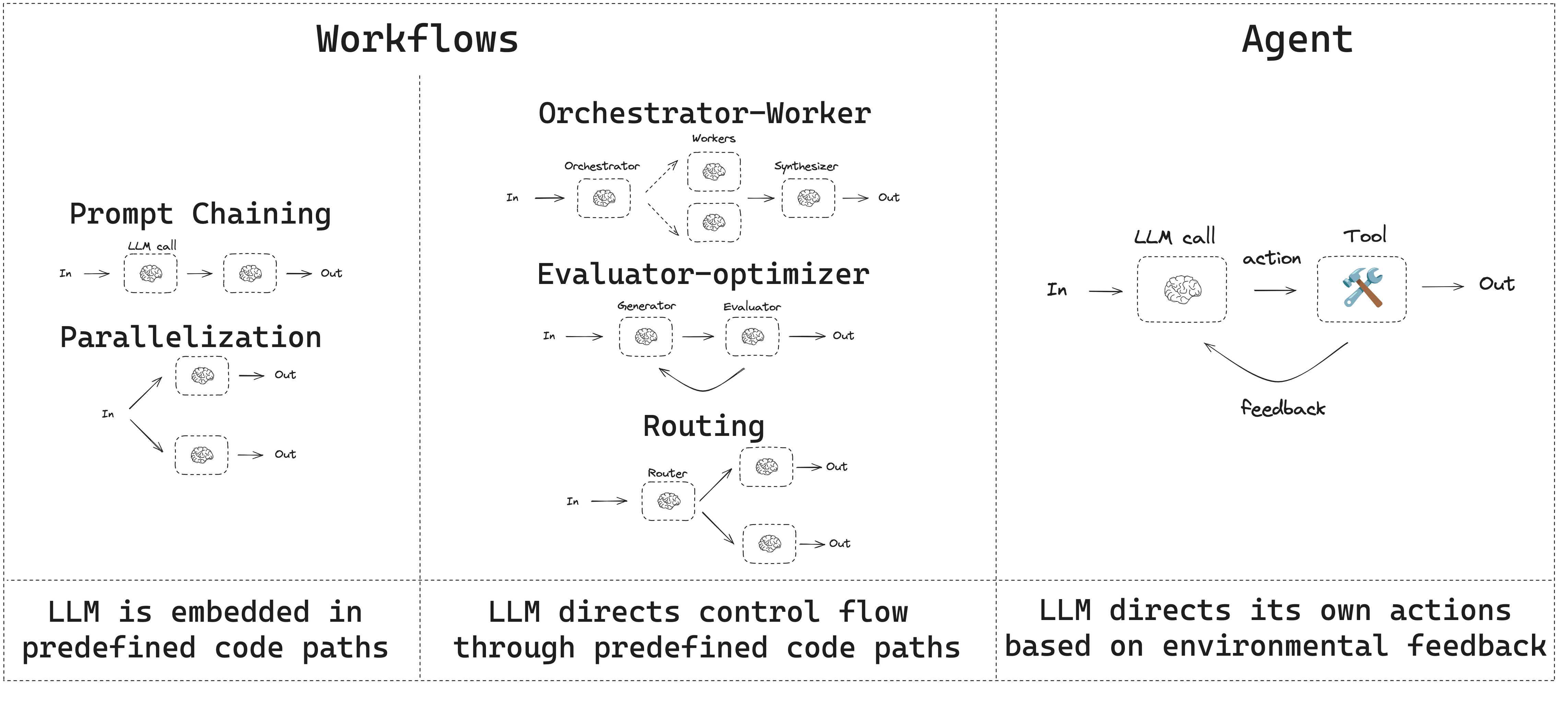

This guide reviews common workflow and agent patterns.

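- Workflows have predetermined code paths and are designed to run in a specific order.

- Agents are dynamic and define their own processes and tool usage.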
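The difference between the two can be sketched in plain TypeScript with no LangChain dependency. Everything in this sketch is hypothetical: `fakeModel` stands in for an LLM call, and `runWorkflow` and `runAgent` are illustrative names rather than library APIs.

```typescript
// What the stand-in "model" can ask for: another tool call, or a final answer.
type Action =
  | { tool: "add" | "double"; arg: number }
  | { done: true; answer: number };

const tools = {
  add: (x: number): number => x + 1,
  double: (x: number): number => x * 2,
};

// Workflow: the sequence of steps is fixed in code ahead of time.
function runWorkflow(input: number): number {
  const afterAdd = tools.add(input);
  return tools.double(afterAdd);
}

// Hypothetical policy standing in for an LLM: keep doubling until the value reaches 10.
function fakeModel(value: number): Action {
  return value >= 10 ? { done: true, answer: value } : { tool: "double", arg: value };
}

// Agent: a loop in which the model decides the next tool (or decides to stop).
// maxSteps is a safety cap so the loop always terminates.
function runAgent(input: number, maxSteps = 10): number {
  let value = input;
  for (let step = 0; step < maxSteps; step++) {
    const action = fakeModel(value);
    if ("done" in action) return action.answer;
    value = tools[action.tool](action.arg);
  }
  return value;
}
```

In the workflow, the order of tool calls is visible in the source. In the agent, the order only emerges at run time from the model's decisions.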

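Setup

To build a workflow or agent, you can use any chat model that supports structured output and tool calling. The following examples use Anthropic:

- Install dependencies:

npm install @langchain/langgraph @langchain/core @langchain/anthropic langchain zod

- Initialize the LLM: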

import { ChatAnthropic } from "@langchain/anthropic";

const llm = new ChatAnthropic({

model: "claude-sonnet-4-6",

apiKey: "<your_anthropic_key>"

});

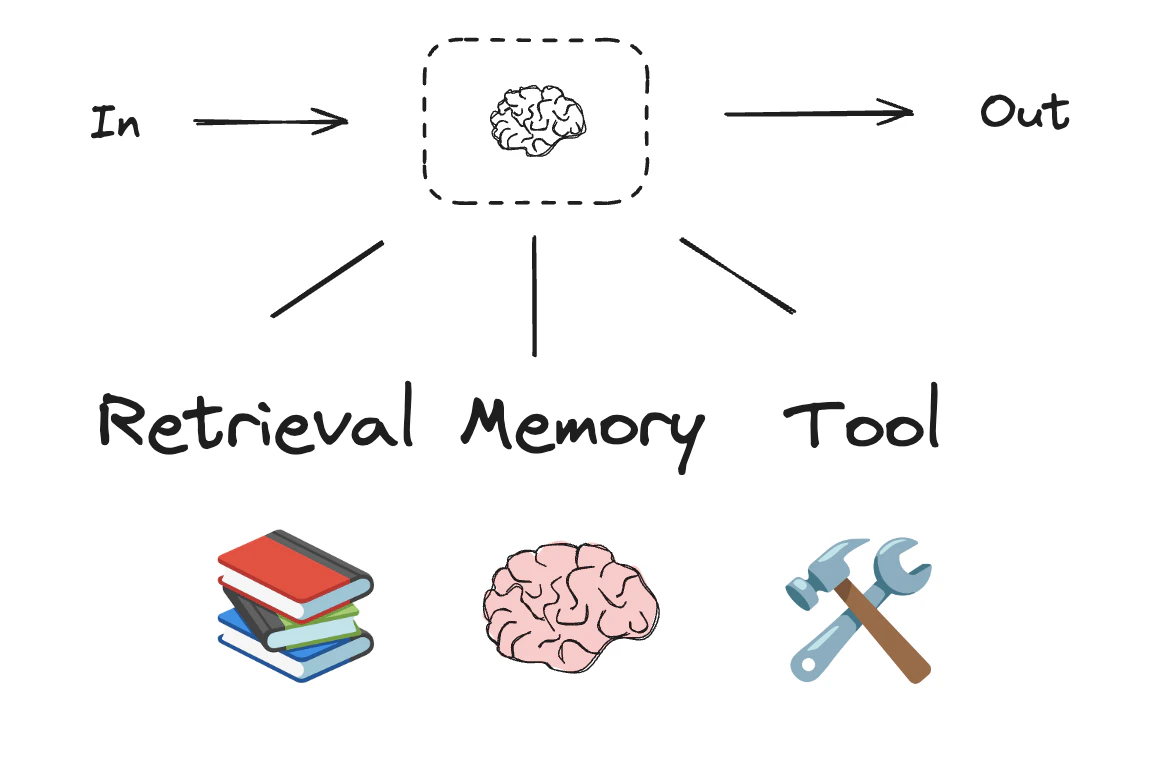

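LLMs and augmentations

Workflows and agentic systems are built on LLMs and the augmentations you add to them. Tool calling, structured output, and short-term memory are a few options for tailoring LLMs to your needs.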

import * as z from "zod";

import { tool } from "langchain";

// Schema for structured output

const SearchQuery = z.object({

search_query: z.string().describe("Query that is optimized for web search."),

justification: z

.string()

.describe("Why this query is relevant to the user's request."),

});

// Augment the LLM with schema for structured output

const structuredLlm = llm.withStructuredOutput(SearchQuery);

// Invoke the augmented LLM

const output = await structuredLlm.invoke(

"How does Calcium CT score relate to high cholesterol?"

);

// Define a tool

const multiply = tool(

({ a, b }) => {

return a * b;

},

{

name: "multiply",

description: "Multiply two numbers",

schema: z.object({

a: z.number(),

b: z.number(),

}),

}

);

// Augment the LLM with tools

const llmWithTools = llm.bindTools([multiply]);

// Invoke the LLM with input that triggers the tool call

const msg = await llmWithTools.invoke("What is 2 times 3?");

// Get the tool call

console.log(msg.tool_calls);

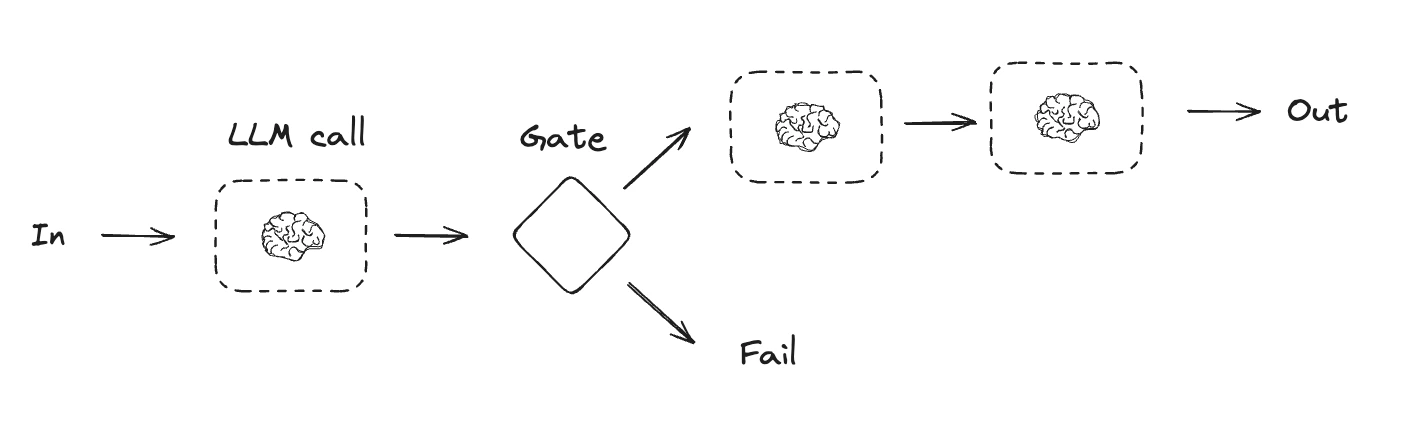

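Prompt chaining

In prompt chaining, each LLM call processes the output of the previous call. It is often used for well-defined tasks that can be broken down into smaller, verifiable steps. Some examples include:

- Translating documents into different languages

- Verifying generated content for consistency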

import { StateGraph, StateSchema, GraphNode, ConditionalEdgeRouter } from "@langchain/langgraph";

import { z } from "zod/v4";

// Graph state

const State = new StateSchema({

topic: z.string(),

joke: z.string(),

improvedJoke: z.string(),

finalJoke: z.string(),

});

// Define node functions

// First LLM call to generate initial joke

const generateJoke: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(`Write a short joke about ${state.topic}`);

return { joke: msg.content };

};

// Gate function to check if the joke has a punchline

const checkPunchline: ConditionalEdgeRouter<typeof State, "improveJoke"> = (state) => {

// Simple check - does the joke contain "?" or "!"

if (state.joke?.includes("?") || state.joke?.includes("!")) {

return "Pass";

}

return "Fail";

};

// Second LLM call to improve the joke

const improveJoke: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(

`Make this joke funnier by adding wordplay: ${state.joke}`

);

return { improvedJoke: msg.content };

};

// Third LLM call for final polish

const polishJoke: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(

`Add a surprising twist to this joke: ${state.improvedJoke}`

);

return { finalJoke: msg.content };

};

// Build workflow

const chain = new StateGraph(State)

.addNode("generateJoke", generateJoke)

.addNode("improveJoke", improveJoke)

.addNode("polishJoke", polishJoke)

.addEdge("__start__", "generateJoke")

.addConditionalEdges("generateJoke", checkPunchline, {

Pass: "improveJoke",

Fail: "__end__"

})

.addEdge("improveJoke", "polishJoke")

.addEdge("polishJoke", "__end__")

.compile();

// Invoke

const state = await chain.invoke({ topic: "cats" });

console.log("Initial joke:");

console.log(state.joke);

console.log("\n--- --- ---\n");

if (state.improvedJoke !== undefined) {

console.log("Improved joke:");

console.log(state.improvedJoke);

console.log("\n--- --- ---\n");

console.log("Final joke:");

console.log(state.finalJoke);

} else {

console.log("Joke failed quality gate - no punchline detected!");

}
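Stripped of the graph machinery, the chain-plus-gate idea can be sketched as ordinary function composition. The `draft` and `polish` functions are hypothetical stubs standing in for LLM calls, and `runChain` is an illustrative name, not part of LangGraph.

```typescript
type Step = (input: string) => string;

// Hypothetical stubs standing in for two LLM calls.
const draft: Step = (topic) => `Why did the ${topic} cross the road?`;
const polish: Step = (joke) => `${joke} To get to the other slide!`;

// Gate: a cheap programmatic check on the intermediate output.
const passesGate = (text: string): boolean => text.includes("?") || text.includes("!");

// Each step consumes the previous step's output; the gate can end the chain early.
function runChain(topic: string): { output: string; passedGate: boolean } {
  const first = draft(topic);
  if (!passesGate(first)) {
    return { output: first, passedGate: false };
  }
  return { output: polish(first), passedGate: true };
}
```

Because the gate is plain code rather than another model call, it is cheap to run and easy to verify on its own.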

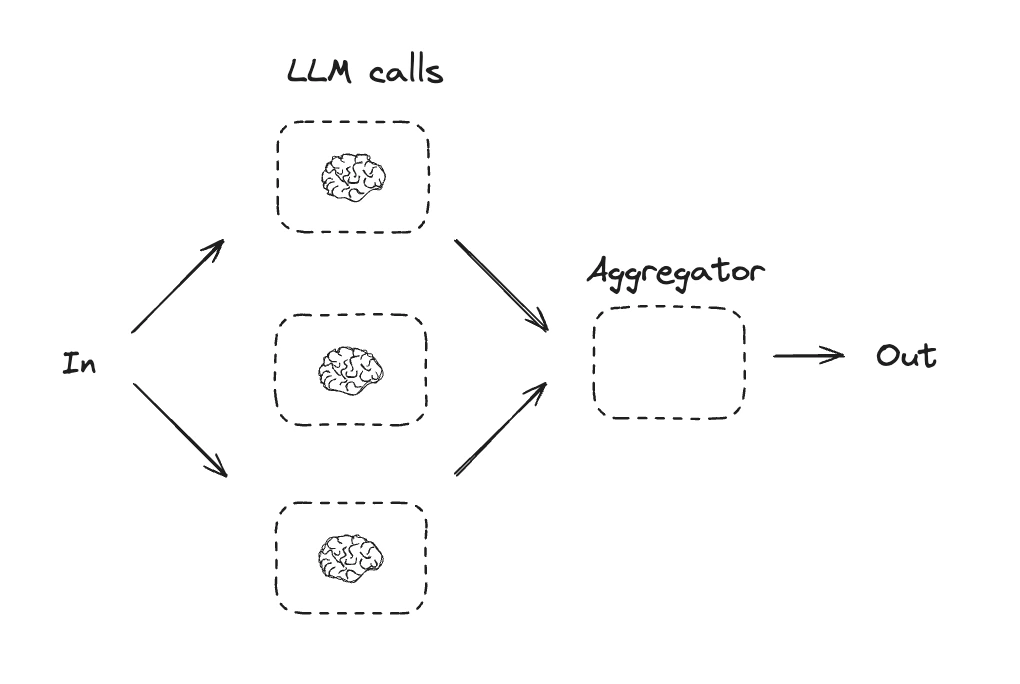

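Parallelization

With parallelization, LLMs work on a task simultaneously. This is done either by running multiple independent subtasks at the same time, or by running the same task multiple times to check for different outputs. Parallelization is commonly used to:

- Split up subtasks and run them in parallel, which increases speed

- Run tasks multiple times to check for different outputs, which increases confidence

Some examples include:

- Running one subtask that processes a document for keywords, and a second subtask that checks for formatting errors

- Running a task multiple times that scores a document against criteria such as the number of citations, the number of sources used, and the quality of the sources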

import { StateGraph, StateSchema, GraphNode } from "@langchain/langgraph";

import * as z from "zod";

// Graph state

const State = new StateSchema({

topic: z.string(),

joke: z.string(),

story: z.string(),

poem: z.string(),

combinedOutput: z.string(),

});

// Nodes

// First LLM call to generate initial joke

const callLlm1: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(`Write a joke about ${state.topic}`);

return { joke: msg.content };

};

// Second LLM call to generate story

const callLlm2: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(`Write a story about ${state.topic}`);

return { story: msg.content };

};

// Third LLM call to generate poem

const callLlm3: GraphNode<typeof State> = async (state) => {

const msg = await llm.invoke(`Write a poem about ${state.topic}`);

return { poem: msg.content };

};

// Combine the joke, story and poem into a single output

const aggregator: GraphNode<typeof State> = async (state) => {

const combined = `Here's a story, joke, and poem about ${state.topic}!\n\n` +

`STORY:\n${state.story}\n\n` +

`JOKE:\n${state.joke}\n\n` +

`POEM:\n${state.poem}`;

return { combinedOutput: combined };

};

// Build workflow

const parallelWorkflow = new StateGraph(State)

.addNode("callLlm1", callLlm1)

.addNode("callLlm2", callLlm2)

.addNode("callLlm3", callLlm3)

.addNode("aggregator", aggregator)

.addEdge("__start__", "callLlm1")

.addEdge("__start__", "callLlm2")

.addEdge("__start__", "callLlm3")

.addEdge("callLlm1", "aggregator")

.addEdge("callLlm2", "aggregator")

.addEdge("callLlm3", "aggregator")

.addEdge("aggregator", "__end__")

.compile();

// Invoke

const result = await parallelWorkflow.invoke({ topic: "cats" });

console.log(result.combinedOutput);
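The example above splits work into different subtasks. The other variant described earlier, running the same task several times to raise confidence, can be sketched as a majority vote. This is a minimal sketch in plain TypeScript: `fakeGrader` is a hypothetical, deterministic stand-in for a sampled LLM call, and `majorityVote` is an illustrative helper name.

```typescript
// Hypothetical stand-in for an LLM grader. The seed imitates sampling variance:
// every third run disagrees with the others.
async function fakeGrader(document: string, seed: number): Promise<"pass" | "fail"> {
  const looksCited = document.includes("[1]");
  if (seed % 3 === 0) return looksCited ? "fail" : "pass";
  return looksCited ? "pass" : "fail";
}

// Run the same task `runs` times concurrently and keep the most common answer.
async function majorityVote(
  document: string,
  runs: number
): Promise<{ verdict: string; agreement: number }> {
  const votes = await Promise.all(
    Array.from({ length: runs }, (_, i) => fakeGrader(document, i))
  );

  // Tally the votes.
  const counts = new Map<string, number>();
  for (const vote of votes) {
    counts.set(vote, (counts.get(vote) ?? 0) + 1);
  }

  // Pick the answer with the highest count (first seen wins a tie).
  let verdict = "";
  let best = 0;
  counts.forEach((count, answer) => {
    if (count > best) {
      best = count;
      verdict = answer;
    }
  });

  return { verdict, agreement: best / runs };
}
```

The `agreement` ratio gives a rough confidence signal. An odd number of runs avoids ties for a two-way decision.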

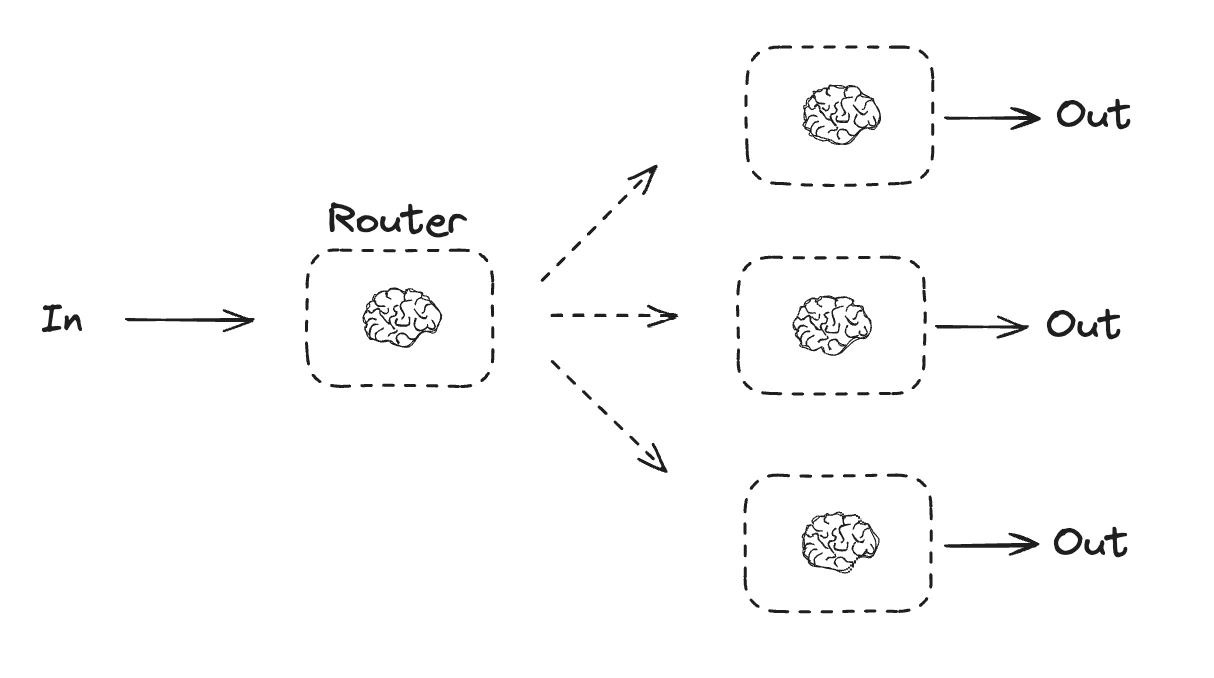

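Routing

Routing workflows process inputs and then direct them to context-specific tasks. This allows you to define specialized flows for complex tasks. For example, a workflow built to answer product-related questions might first classify the type of question and then route the request to a specific process for pricing, refunds, returns, and so on.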

import { StateGraph, StateSchema, GraphNode, ConditionalEdgeRouter } from "@langchain/langgraph";

import * as z from "zod";

// Schema for structured output to use as routing logic

const routeSchema = z.object({

step: z.enum(["poem", "story", "joke"]).describe(

"The next step in the routing process"

),

});

// Augment the LLM with schema for structured output

const router = llm.withStructuredOutput(routeSchema);

// Graph state

const State = new StateSchema({

input: z.string(),

decision: z.string(),

output: z.string(),

});

// Nodes

// Write a story

const llmCall1: GraphNode<typeof State> = async (state) => {

const result = await llm.invoke([{

role: "system",

content: "You are an expert storyteller.",

}, {

role: "user",

content: state.input

}]);

return { output: result.content };

};

// Write a joke

const llmCall2: GraphNode<typeof State> = async (state) => {

const result = await llm.invoke([{

role: "system",

content: "You are an expert comedian.",

}, {

role: "user",

content: state.input

}]);

return { output: result.content };

};

// Write a poem

const llmCall3: GraphNode<typeof State> = async (state) => {

const result = await llm.invoke([{

role: "system",

content: "You are an expert poet.",

}, {

role: "user",

content: state.input

}]);

return { output: result.content };

};

const llmCallRouter: GraphNode<typeof State> = async (state) => {

// Route the input to the appropriate node

const decision = await router.invoke([

{

role: "system",

content: "Route the input to story, joke, or poem based on the user's request."

},

{

role: "user",

content: state.input

},

]);

return { decision: decision.step };

};

// Conditional edge function to route to the appropriate node

const routeDecision: ConditionalEdgeRouter<typeof State, "llmCall1" | "llmCall2" | "llmCall3"> = (state) => {

// Return the node name you want to visit next

if (state.decision === "story") {

return "llmCall1";

} else if (state.decision === "joke") {

return "llmCall2";

} else {

return "llmCall3";

}

};

// Build workflow

const routerWorkflow = new StateGraph(State)

.addNode("llmCall1", llmCall1)

.addNode("llmCall2", llmCall2)

.addNode("llmCall3", llmCall3)

.addNode("llmCallRouter", llmCallRouter)

.addEdge("__start__", "llmCallRouter")

.addConditionalEdges(

"llmCallRouter",

routeDecision,

["llmCall1", "llmCall2", "llmCall3"],

)

.addEdge("llmCall1", "__end__")

.addEdge("llmCall2", "__end__")

.addEdge("llmCall3", "__end__")

.compile();

// Invoke

const state = await routerWorkflow.invoke({

input: "Write me a joke about cats"

});

console.log(state.output);
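The product-question scenario mentioned earlier follows the same shape: classify first, then dispatch. The sketch below uses plain TypeScript, with a keyword-based `classify` function as a hypothetical stand-in for a structured-output LLM call. The route names and handler strings are illustrative assumptions.

```typescript
type Route = "pricing" | "refund" | "return";

// Hypothetical stand-in for a structured-output classifier call.
function classify(question: string): Route {
  const q = question.toLowerCase();
  if (q.includes("refund") || q.includes("money back")) return "refund";
  if (q.includes("return") || q.includes("send back")) return "return";
  return "pricing";
}

// One specialized handler per route; each would be its own flow in a real system.
const handlers: Record<Route, (question: string) => string> = {
  pricing: (q) => `[pricing flow] ${q}`,
  refund: (q) => `[refund flow] ${q}`,
  return: (q) => `[return flow] ${q}`,
};

// Router: decide the route, then hand the input to the matching handler.
function route(question: string): string {
  const decision = classify(question);
  return handlers[decision](question);
}
```

Typing `handlers` as `Record<Route, ...>` means the compiler reports a missing handler whenever a new route is added to the union.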

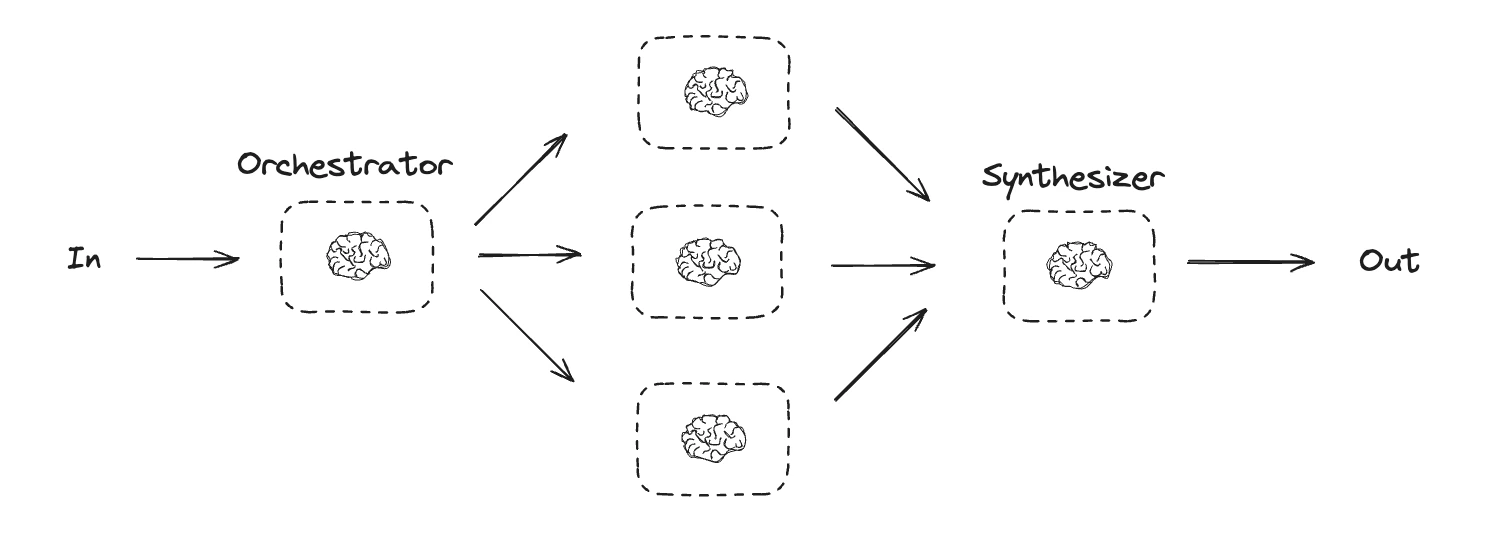

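Orchestrator-worker

In an orchestrator-worker configuration, the orchestrator:

- Breaks down tasks into subtasks

- Delegates subtasks to workers

- Synthesizes worker outputs into a final result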

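// Assumes `z` from "zod" is in scope, as imported in the surrounding examples

// Schema for a single report section

const sectionSchema = z.object({

name: z.string().describe("Name for this section of the report."),

description: z.string().describe("Brief overview of the topics covered in this section."),

});

// Schema for the full report plan

const sectionsSchema = z.object({

sections: z.array(sectionSchema).describe("Sections of the report."),

});

// Types inferred from the schemas, used by the graph state below

type SectionSchema = z.infer<typeof sectionSchema>;

type SectionsSchema = z.infer<typeof sectionsSchema>;

// Augment the LLM with schema for structured output

const planner = llm.withStructuredOutput(sectionsSchema);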

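Creating workers in LangGraph

Orchestrator-worker workflows are common, and LangGraph has built-in support for them. The Send API lets you dynamically create worker nodes and send each one a specific input. Each worker has its own state, and all worker outputs are written to a shared state key that is accessible to the orchestrator graph. This gives the orchestrator access to every worker output so it can synthesize them into a final output. The example below iterates over a list of sections and uses the Send API to send one section to each worker.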

import { StateGraph, StateSchema, ReducedValue, GraphNode, ConditionalEdgeRouter, Send } from "@langchain/langgraph";

import * as z from "zod";

// Graph state

const State = new StateSchema({

topic: z.string(),

sections: z.array(z.custom<SectionSchema>()),

completedSections: new ReducedValue(

z.array(z.string()).default(() => []),

{ reducer: (a, b) => a.concat(b) }

),

finalReport: z.string(),

});

// Worker state

const WorkerState = new StateSchema({

section: z.custom<SectionSchema>(),

completedSections: new ReducedValue(

z.array(z.string()).default(() => []),

{ reducer: (a, b) => a.concat(b) }

),

});

// Nodes

const orchestrator: GraphNode<typeof State> = async (state) => {

// Generate the report plan (a list of sections)

const reportSections = await planner.invoke([

{ role: "system", content: "Generate a plan for the report." },

{ role: "user", content: `Here is the report topic: ${state.topic}` },

]);

return { sections: reportSections.sections };

};

const llmCall: GraphNode<typeof WorkerState> = async (state) => {

// Generate section

const section = await llm.invoke([

{

role: "system",

content: "Write a report section following the provided name and description. Include no preamble for each section. Use markdown formatting.",

},

{

role: "user",

content: `Here is the section name: ${state.section.name} and description: ${state.section.description}`,

},

]);

// Write the updated section to completed sections

return { completedSections: [section.content] };

};

const synthesizer: GraphNode<typeof State> = async (state) => {

// List of completed sections

const completedSections = state.completedSections;

// Format completed section to str to use as context for final sections

const completedReportSections = completedSections.join("\n\n---\n\n");

return { finalReport: completedReportSections };

};

// Conditional edge function to create llm_call workers that each write a section of the report

const assignWorkers: ConditionalEdgeRouter<typeof State, "llmCall"> = (state) => {

// Kick off section writing in parallel via Send() API

return state.sections.map((section) =>

new Send("llmCall", { section })

);

};

// Build workflow

const orchestratorWorker = new StateGraph(State)

.addNode("orchestrator", orchestrator)

.addNode("llmCall", llmCall)

.addNode("synthesizer", synthesizer)

.addEdge("__start__", "orchestrator")

.addConditionalEdges(

"orchestrator",

assignWorkers,

["llmCall"]

)

.addEdge("llmCall", "synthesizer")

.addEdge("synthesizer", "__end__")

.compile();

// Invoke

const state = await orchestratorWorker.invoke({

topic: "Create a report on LLM scaling laws"

});

console.log(state.finalReport);
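The shared `completedSections` key works because its reducer, `(a, b) => a.concat(b)`, appends each worker's update instead of overwriting the previous one. The sketch below imitates that fan-out and fan-in in plain TypeScript. It illustrates the idea only and is not how LangGraph schedules workers internally. `writeSection` is a hypothetical stub for the per-section LLM call.

```typescript
type Section = { name: string; description: string };

// Same reducer shape as in the example above: append the update to the accumulated list.
const concatReducer = (current: string[], update: string[]): string[] =>
  current.concat(update);

// Hypothetical worker: stands in for an LLM call that writes one section.
// It returns a partial state update, like a graph node would.
async function writeSection(section: Section): Promise<{ completedSections: string[] }> {
  return { completedSections: [`## ${section.name}\n${section.description}`] };
}

// Fan out one worker per section, then fold the partial updates with the reducer.
async function runWorkers(sections: Section[]): Promise<string[]> {
  const updates = await Promise.all(sections.map((section) => writeSection(section)));
  return updates.reduce(
    (acc, update) => concatReducer(acc, update.completedSections),
    [] as string[]
  );
}
```

Without a reducer, each worker's single-element array would replace the last one, and the synthesizer would only see one section.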

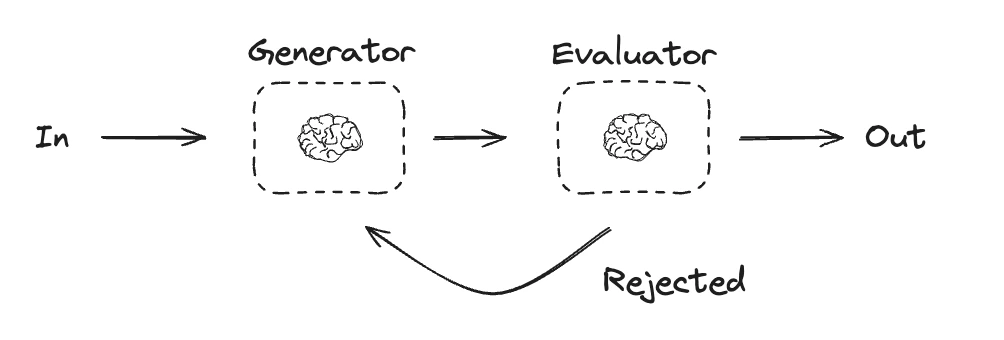

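Evaluator-optimizer

In evaluator-optimizer workflows, one LLM call creates a response and another evaluates it. If the evaluator or a human-in-the-loop determines that the response needs refinement, feedback is provided and the response is regenerated. This loop continues until an acceptable response is produced.

Evaluator-optimizer workflows are commonly used when a task has clear success criteria but requires iteration to meet them. For example, a translation between two languages is not always a perfect match on the first attempt. It may take several iterations to produce a translation that carries the same meaning in both languages.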

import { StateGraph, StateSchema, GraphNode, ConditionalEdgeRouter } from "@langchain/langgraph";

import * as z from "zod";

// Graph state

const State = new StateSchema({

joke: z.string(),

topic: z.string(),

feedback: z.string(),

funnyOrNot: z.string(),

});

// Schema for structured output to use in evaluation

const feedbackSchema = z.object({

grade: z.enum(["funny", "not funny"]).describe(

"Decide if the joke is funny or not."

),

feedback: z.string().describe(

"If the joke is not funny, provide feedback on how to improve it."

),

});

// Augment the LLM with schema for structured output

const evaluator = llm.withStructuredOutput(feedbackSchema);

// Nodes

const llmCallGenerator: GraphNode<typeof State> = async (state) => {

// LLM generates a joke

let msg;

if (state.feedback) {

msg = await llm.invoke(

`Write a joke about ${state.topic} but take into account the feedback: ${state.feedback}`

);

} else {

msg = await llm.invoke(`Write a joke about ${state.topic}`);

}

return { joke: msg.content };

};

const llmCallEvaluator: GraphNode<typeof State> = async (state) => {

// LLM evaluates the joke

const grade = await evaluator.invoke(`Grade the joke ${state.joke}`);

return { funnyOrNot: grade.grade, feedback: grade.feedback };

};

// Conditional edge function to route back to joke generator or end based upon feedback from the evaluator

const routeJoke: ConditionalEdgeRouter<typeof State, "llmCallGenerator"> = (state) => {

// Route back to joke generator or end based upon feedback from the evaluator

if (state.funnyOrNot === "funny") {

return "Accepted";

} else {

return "Rejected + Feedback";

}

};

// Build workflow

const optimizerWorkflow = new StateGraph(State)

.addNode("llmCallGenerator", llmCallGenerator)

.addNode("llmCallEvaluator", llmCallEvaluator)

.addEdge("__start__", "llmCallGenerator")

.addEdge("llmCallGenerator", "llmCallEvaluator")

.addConditionalEdges(

"llmCallEvaluator",

routeJoke,

{

// Name returned by routeJoke : Name of next node to visit

"Accepted": "__end__",

"Rejected + Feedback": "llmCallGenerator",

}

)

.compile();

// Invoke

const state = await optimizerWorkflow.invoke({ topic: "Cats" });

console.log(state.joke);
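The loop above keeps running until the evaluator accepts. In practice it can help to bound the number of rounds. The sketch below shows the same generate-evaluate-retry cycle in plain TypeScript with an explicit cap. The cap is an added assumption and is not part of the example above. `generate` and `evaluate` are hypothetical stubs for the two LLM calls.

```typescript
type Evaluation = { grade: "accept" | "reject"; feedback: string };

// Hypothetical generator: stands in for an LLM call that may use prior feedback.
function generate(topic: string, feedback?: string): string {
  return feedback ? `${topic} joke (revised: ${feedback})` : `${topic} joke`;
}

// Hypothetical evaluator: accepts only drafts that were revised at least once.
function evaluate(draft: string): Evaluation {
  return draft.includes("revised")
    ? { grade: "accept", feedback: "" }
    : { grade: "reject", feedback: "add a pun" };
}

// Generate, evaluate, and retry with feedback until accepted or out of rounds.
function optimize(
  topic: string,
  maxRounds = 3
): { draft: string; rounds: number; accepted: boolean } {
  let feedback: string | undefined;
  let draft = "";
  for (let round = 1; round <= maxRounds; round++) {
    draft = generate(topic, feedback);
    const result = evaluate(draft);
    if (result.grade === "accept") {
      return { draft, rounds: round, accepted: true };
    }
    feedback = result.feedback;
  }
  // Cap reached: return the last draft and flag that it was never accepted.
  return { draft, rounds: maxRounds, accepted: false };
}
```

Returning the `accepted` flag lets the caller distinguish a response that met the criteria from one that merely exhausted the budget.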

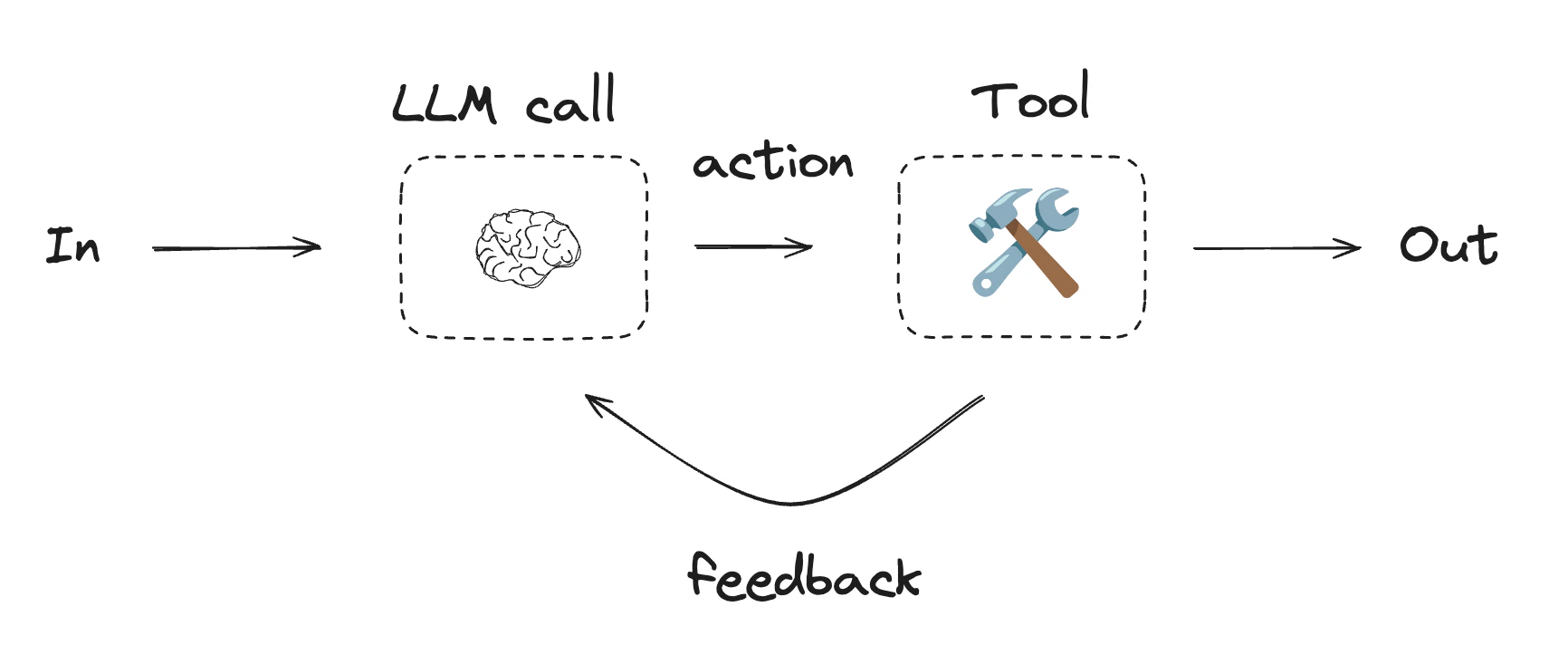

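Agents

Agents are typically implemented as an LLM performing actions using tools. They operate in continuous feedback loops and are used in situations where problems and solutions are unpredictable. Agents have more autonomy than workflows and can decide which tools to use and how to solve a problem. You can still define the available toolset and guidelines for how agents behave.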

Using tools

import { tool } from "@langchain/core/tools";

import * as z from "zod";

// Define tools

const multiply = tool(

({ a, b }) => {

return a * b;

},

{

name: "multiply",

description: "Multiply two numbers together",

schema: z.object({

a: z.number().describe("first number"),

b: z.number().describe("second number"),

}),

}

);

const add = tool(

({ a, b }) => {

return a + b;

},

{

name: "add",

description: "Add two numbers together",

schema: z.object({

a: z.number().describe("first number"),

b: z.number().describe("second number"),

}),

}

);

const divide = tool(

({ a, b }) => {

return a / b;

},

{

name: "divide",

description: "Divide two numbers",

schema: z.object({

a: z.number().describe("first number"),

b: z.number().describe("second number"),

}),

}

);

// Augment the LLM with tools

const tools = [add, multiply, divide];

const toolsByName = Object.fromEntries(tools.map((tool) => [tool.name, tool]));

const llmWithTools = llm.bindTools(tools);

import { StateGraph, StateSchema, MessagesValue, GraphNode, ConditionalEdgeRouter } from "@langchain/langgraph";

import { ToolNode } from "@langchain/langgraph/prebuilt";

import {

SystemMessage,

ToolMessage

} from "@langchain/core/messages";

// Graph state

const State = new StateSchema({

messages: MessagesValue,

});

// Nodes

const llmCall: GraphNode<typeof State> = async (state) => {

// LLM decides whether to call a tool or not

const result = await llmWithTools.invoke([

{

role: "system",

content: "You are a helpful assistant tasked with performing arithmetic on a set of inputs."

},

...state.messages

]);

return {

messages: [result]

};

};

const toolNode = new ToolNode(tools);

// Conditional edge function to route to the tool node or end

const shouldContinue: ConditionalEdgeRouter<typeof State, "toolNode"> = (state) => {

const messages = state.messages;

const lastMessage = messages.at(-1);

// If the LLM makes a tool call, then perform an action

if (lastMessage?.tool_calls?.length) {

return "toolNode";

}

// Otherwise, we stop (reply to the user)

return "__end__";

};

// Build workflow

const agentBuilder = new StateGraph(State)

.addNode("llmCall", llmCall)

.addNode("toolNode", toolNode)

// Add edges to connect nodes

.addEdge("__start__", "llmCall")

.addConditionalEdges(

"llmCall",

shouldContinue,

["toolNode", "__end__"]

)

.addEdge("toolNode", "llmCall")

.compile();

// Invoke

const messages = [{

role: "user",

content: "Add 3 and 4."

}];

const result = await agentBuilder.invoke({ messages });

console.log(result.messages);
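The tool-execution step of the loop can be pictured as a lookup by name followed by a call, which is what a name-keyed registry such as `toolsByName` above makes possible. The following is a minimal sketch in plain TypeScript under simplifying assumptions: the `ToolCall` and `ToolResult` shapes are hypothetical, and the tools are ordinary functions rather than LangChain tool objects.

```typescript
type ToolArgs = { a: number; b: number };
type ToolCall = { id: string; name: string; args: ToolArgs };
type ToolResult = { toolCallId: string; content: string };
type ToolFn = (args: ToolArgs) => number;

// Name-keyed registry of plain functions (illustrative, not LangChain tools).
const registry = new Map<string, ToolFn>([
  ["add", ({ a, b }) => a + b],
  ["multiply", ({ a, b }) => a * b],
  ["divide", ({ a, b }) => a / b],
]);

// Execute every requested call and return one result per call, keyed by call id
// so each result can be matched back to the request that produced it.
function executeToolCalls(calls: ToolCall[]): ToolResult[] {
  return calls.map((call) => {
    const fn = registry.get(call.name);
    if (!fn) {
      // Report unknown tools as content so the model can see the error and recover.
      return { toolCallId: call.id, content: `Error: unknown tool "${call.name}"` };
    }
    return { toolCallId: call.id, content: String(fn(call.args)) };
  });
}
```

Returning an error message instead of throwing keeps the feedback loop alive: the model receives the failure as an observation and can choose a different tool on the next turn.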
